I did not put a lot of thought into choosing distkey and sortkey values for this test but it certainly seems as though choosing these correctly could have a dramatic impact to the speed of queries. These queries hit significantly less data and as the data quantity falls any latency becomes an increasingly large proportion of the whole.

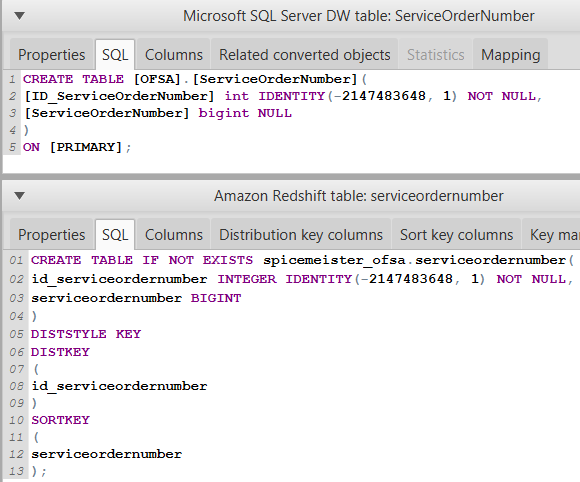

That speed drops for the 4th and 5th records is, I think, more to do with some latency in query execution, rather like we saw in the previous tests. And yet, the speed difference between row 2 (which presumably sees no benefit from either setting) and row 3 (enhanced just by the sortkey) is dramatic: almost a 6-fold speed increase! The filters are either based on time (the sortkey) or category, an item attribute which is not part of either sortkey or distkey. I don't think I'm benefiting from the distkey at all in this test set as I set the distkey to be storeid and none of these filters are store-based. So with this in mind let's look at the results table again. (More details on selecting a sortkey here) I could have used multiple fields in the sortkey but chose to get started with just 1, the week identifier in the fact table and associated calendar master table, periodid. Redshift uses this information to optimize query plans and will (hopefully) skip past entire sections of data that are not within the filter. Sortkeydefines how records will be sorted on each node. I did not add the same distkey to the store master table, but as that is small, just a few hundred records, copying it between nodes to make a join should not be especially impactful. Note that this would primarily help with faster joins. (More details om selecting a distkey here). Redshift will try to put records with the same storid on the same node. In this instance I chose to spread it out based on the store identifier (storeid). Remember that Redshift is running on a cluster of processing nodes, not just one machine.ĭistkey defines how the data in this fact table should be spread across the multiple nodes in the cluster. Note that Redshift doesn't use indexes or partitions as I am used to seeing them in relational databases so, in many ways, table definition is a lot simpler. For each query I am summarizing 5 facts from the main fact table, joining to each of the master tables and using a variety of filters to restrict the records I want to aggregate over.

Test setupįor this test, I am using the same database as before (simulated Point of Sale data at item-store-week level with item, store and calendar master tables) on 4 'dw1.xlarge' AWS nodes. In this follow-up post then, let's look at how Redshift performs when we want to aggregate across particular records. In this initial testing I was aggregating the entire fact table to get comparable tests to the previous benchmark, but that's typically not how a reporting (or analytic) system would access the data. RedShift performed very well indeed, especially so as the number of facts returned by the queries increased. In my recent post on "The right tools (structured) BIG DATA handling", I looked at using AWS Redshift to generate summaries from a large fact table and compared it to previous benchmark results using a columnar database on a fast, SSD drive.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed